Why Agentic AI Security Is Becoming the Real Enterprise AI Battleground

Ethan Carter

For the past year, enterprise AI conversations have mostly focused on capability: which models are strongest, which copilots are fastest, and which platforms can automate more work. But in 2026, that discussion is maturing. As companies move from testing assistants to deploying agentic systems, the real question is no longer only what these systems can do. It is whether they can be trusted to do it safely.

That shift matters because agentic AI is fundamentally different from passive AI tooling. An autonomous or semi-autonomous agent can retrieve data, trigger workflows, make downstream decisions, interact with enterprise systems, and act at machine speed. Once AI starts operating inside live business processes, security stops being a side concern and becomes core infrastructure.

Agentic AI changes the security equation because it expands both capability and exposure at the same time. The more useful an AI agent becomes, the more systems it can touch, the more data it can access, and the more risk it introduces if identity, permissions, and guardrails are poorly designed.

AI agents are changing what “deployment” means

Traditional enterprise software deployments usually involve predictable permission models, bounded workflows, and clearly defined user actions. Agentic AI disrupts that pattern. Instead of waiting for a human to complete every step, these systems can interpret context, choose from multiple paths, and act with a degree of autonomy.

That sounds powerful—because it is. But it also means the attack surface is changing. A static dashboard rarely improvises. An AI agent might. And when software can interpret intent, chain tasks, and touch multiple systems, organizations need a new security model that is built for dynamic behavior rather than fixed logic.

Why identity becomes the center of the problem

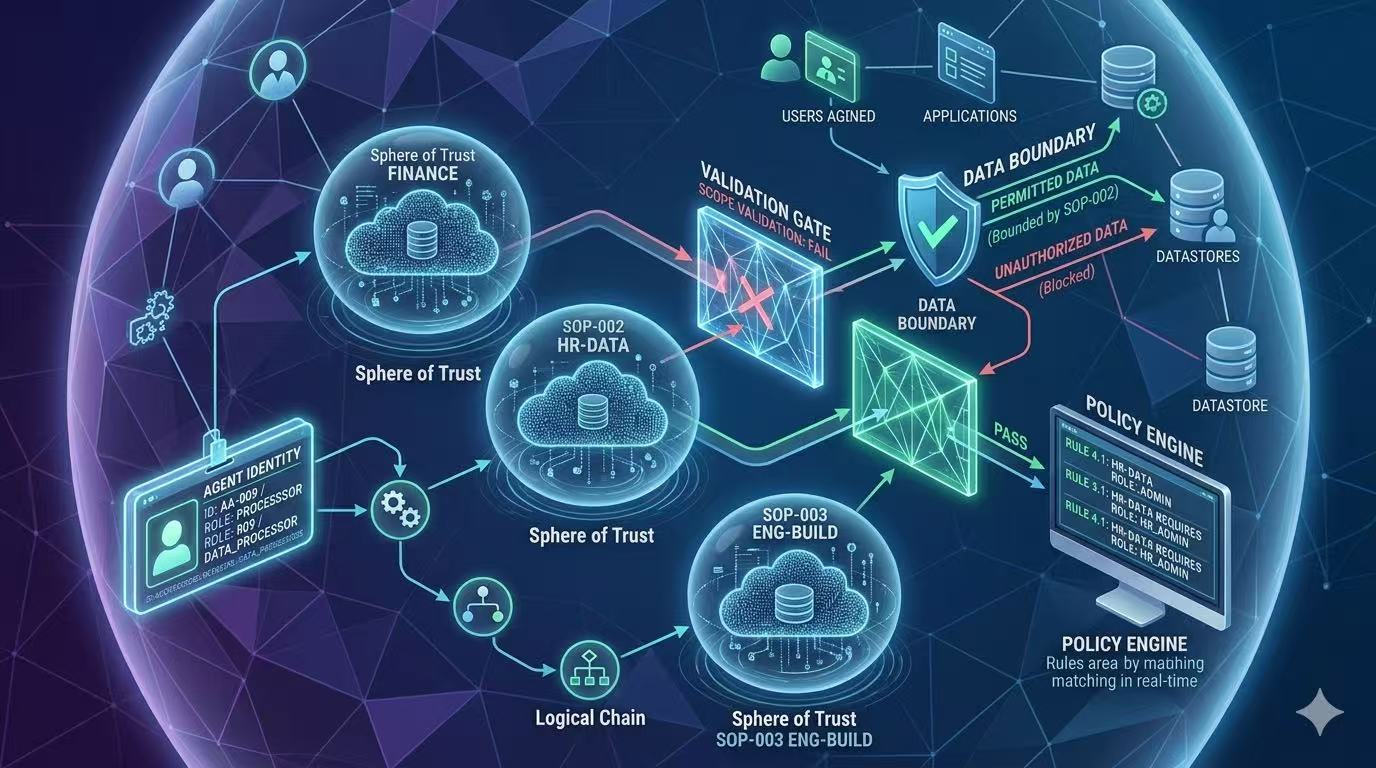

The first major issue is identity. In a traditional setup, user identity is relatively clear: a person logs in, a role is assigned, permissions are enforced. But with agentic AI, identity becomes layered and ambiguous.

Questions emerge immediately:

Is the agent acting on behalf of a user or independently?

What systems is it allowed to reach?

How long should access persist?

What happens when an agent chains tasks across tools with different permission models?

How should organizations audit actions taken by AI rather than humans?

This is why identity and access management is becoming central to enterprise AI architecture. If organizations do not know exactly who an agent is, what it can touch, and under which constraints it operates, the rest of the AI stack becomes much harder to trust.
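To make those questions concrete, here is a minimal Python sketch of what an explicit agent grant could look like. Everything in it is an assumption for illustration: the `AgentGrant` record, the `is_allowed` helper, the scope names, and the 15-minute lifetime are hypothetical and do not come from any particular identity product. The point is only that "who the agent is, on whose behalf it acts, what it can touch, and for how long" can all be written down and checked.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical record describing who an agent is and what it may touch."""
    agent_id: str
    on_behalf_of: Optional[str]  # delegating user, or None if the agent acts independently
    scopes: frozenset            # illustrative scope names such as "crm:read"
    expires_at: datetime         # access is time-limited rather than persistent


def is_allowed(grant: AgentGrant, scope: str, now: Optional[datetime] = None) -> bool:
    """Allow an action only if the grant is unexpired and explicitly lists the scope."""
    now = now or datetime.now(timezone.utc)
    return now < grant.expires_at and scope in grant.scopes


issued = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = AgentGrant(
    agent_id="invoice-agent-01",
    on_behalf_of="alice@example.com",
    scopes=frozenset({"crm:read", "billing:read"}),
    expires_at=issued + timedelta(minutes=15),
)

print(is_allowed(grant, "crm:read", now=issued + timedelta(minutes=5)))       # True
print(is_allowed(grant, "billing:write", now=issued + timedelta(minutes=5)))  # False
print(is_allowed(grant, "crm:read", now=issued + timedelta(minutes=20)))      # False
```

A real deployment would more likely lean on an existing identity provider than on a hand-rolled check, but the same four fields tend to show up in some form.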

The security issue is not just external attack

A lot of AI security discussion still sounds like traditional cybersecurity: preventing breaches, blocking attackers, reducing external exposure. Those issues still matter, but agentic AI introduces a different category of risk—misaligned or over-permitted internal action.

An AI agent does not need to be malicious to create damage. It can take an incorrect action because of incomplete context. It can surface the wrong data to the wrong person. It can overreach because permissions were loosely scoped. It can trigger workflows faster than humans can review them.

This means enterprise AI risk is increasingly about:

excessive access

poor guardrail design

weak auditability

missing approval thresholds

unclear accountability for agent actions
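Two of the risks above, excessive access and missing approval thresholds, can be illustrated with a small policy gate. The sketch below is an assumption-heavy example, not a reference design: the tool names, the `AUTO_APPROVE_LIMIT` table, and the idea of measuring impact with a single `amount` are all hypothetical. It shows a default-deny policy in which unknown tools are blocked and high-impact actions are routed to a human.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ProposedAction:
    tool: str
    amount: float  # illustrative impact measure, e.g. a refund value


# Hypothetical policy: per-tool limit below which the agent may act on its own.
AUTO_APPROVE_LIMIT = {"issue_refund": 100.0, "send_email": float("inf")}


def evaluate(action: ProposedAction) -> Decision:
    """Default-deny gate: unknown tools are blocked, large actions need a human."""
    limit = AUTO_APPROVE_LIMIT.get(action.tool)
    if limit is None:
        return Decision.DENY
    if action.amount <= limit:
        return Decision.ALLOW
    return Decision.NEEDS_APPROVAL


print(evaluate(ProposedAction("issue_refund", 40.0)))    # Decision.ALLOW
print(evaluate(ProposedAction("issue_refund", 2500.0)))  # Decision.NEEDS_APPROVAL
print(evaluate(ProposedAction("delete_account", 0.0)))   # Decision.DENY
```

The design choice worth noting is the default: a tool missing from the policy is denied rather than allowed, so a loosely scoped agent fails closed.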

Why governance is becoming a product requirement

One of the clearest signals in 2026 is that governance is no longer an optional compliance wrapper. It is becoming a product requirement in its own right.

Enterprises want AI that is useful, but they also want AI that is explainable, bounded, observable, and interruptible. That means governance needs to operate across the full lifecycle:

data ingestion

model access

prompt context

tool permissions

downstream actions

logging and review

This is where the strongest enterprise AI platforms will differentiate themselves. The market may still celebrate new capabilities, but actual deployment decisions will increasingly depend on who can deliver secure, governable, policy-aware AI systems.
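The "logging and review" stage is the easiest one to sketch in code. The example below is one possible approach, assumed for illustration rather than drawn from any specific platform: an append-only audit log in which each entry stores a SHA-256 hash of its own contents plus the previous entry's hash, so later edits to the record become detectable. The function names, field names, and stage labels are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict) -> str:
    """Hash an entry body deterministically (keys sorted before serialising)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict], agent_id: str, stage: str, detail: str) -> Dict:
    """Append an entry that is chained to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"agent_id": agent_id, "stage": stage, "detail": detail, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: List[Dict]) -> bool:
    """Return True only if every entry's hash and chain link are intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log: List[Dict] = []
append_entry(log, "invoice-agent-01", "tool_permissions", "granted crm:read")
append_entry(log, "invoice-agent-01", "downstream_action", "exported 3 records")
print(verify(log))  # True

log[0]["detail"] = "granted crm:write"  # simulate someone editing history
print(verify(log))  # False
```

A hash chain only makes tampering visible; it does not prevent it. Storing the log somewhere the agent itself cannot write to is a separate decision.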

Security teams are becoming AI infrastructure teams

Another major change is organizational. Security teams are no longer just evaluating AI risk after the fact. They are becoming active architects of AI deployment.

That means security is moving upstream. Instead of asking, “How do we secure this AI tool after it has been adopted?” teams are now asking:

How do we define trustworthy agent identity?

What policies should apply before deployment?

Which workflows require human approval?

How do we monitor agent behavior over time?

What counts as abnormal or unsafe action for an AI system?

In that sense, agentic AI security is not just a defensive function. It is becoming enabling infrastructure for enterprise AI itself.
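One simple way to approach the last two questions is to treat action rate as a first signal of abnormal behavior, since agents can trigger workflows faster than humans can review them. The sketch below is a deliberately minimal, hypothetical monitor: the `ActionRateMonitor` class, the limit of three actions, and the 60-second window are illustrative assumptions, and real monitoring would look at far more than rate.

```python
from collections import deque


class ActionRateMonitor:
    """Flags an agent that performs more than max_actions within a sliding window."""

    def __init__(self, max_actions: int, window_seconds: float):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, timestamp: float) -> bool:
        """Record one action; return True if the rate is within the limit."""
        self._timestamps.append(timestamp)
        # Drop actions that have aged out of the window.
        while timestamp - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) <= self.max_actions


monitor = ActionRateMonitor(max_actions=3, window_seconds=60.0)
results = [monitor.record(t) for t in (0.0, 10.0, 20.0, 30.0, 100.0)]
print(results)  # [True, True, True, False, True]
```

The fourth action is flagged because four actions fall inside one 60-second window; by the fifth, the earlier ones have aged out. What happens on a flag (pause the agent, alert a reviewer, require approval) is a policy question this sketch leaves open.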

The next enterprise AI winners will be the most trusted

Many companies still assume the AI race will be won by whoever automates the most work first. That is only partly true. In enterprise settings, the more durable advantage may belong to the organizations that can automate responsibly.

A powerful but poorly governed agent can create hesitation. A slightly narrower but highly trusted system can unlock adoption faster. Enterprise buyers understand this. They do not just want capability—they want confidence.

That is why agentic AI security is emerging as one of the most important strategic categories in the market. It is not a brake on innovation. It is the condition that allows innovation to scale.

If your organization is moving toward agentic AI, now is the time to treat security and governance as product architecture—not as cleanup after deployment. The companies that define trustworthy access, clear boundaries, and strong oversight early will be the ones best positioned to scale AI with confidence.
