HappyHorse Is the New AI Video Tool Everyone Is Watching — But Subtitles Still Decide Whether Videos Perform

Ethan Walker

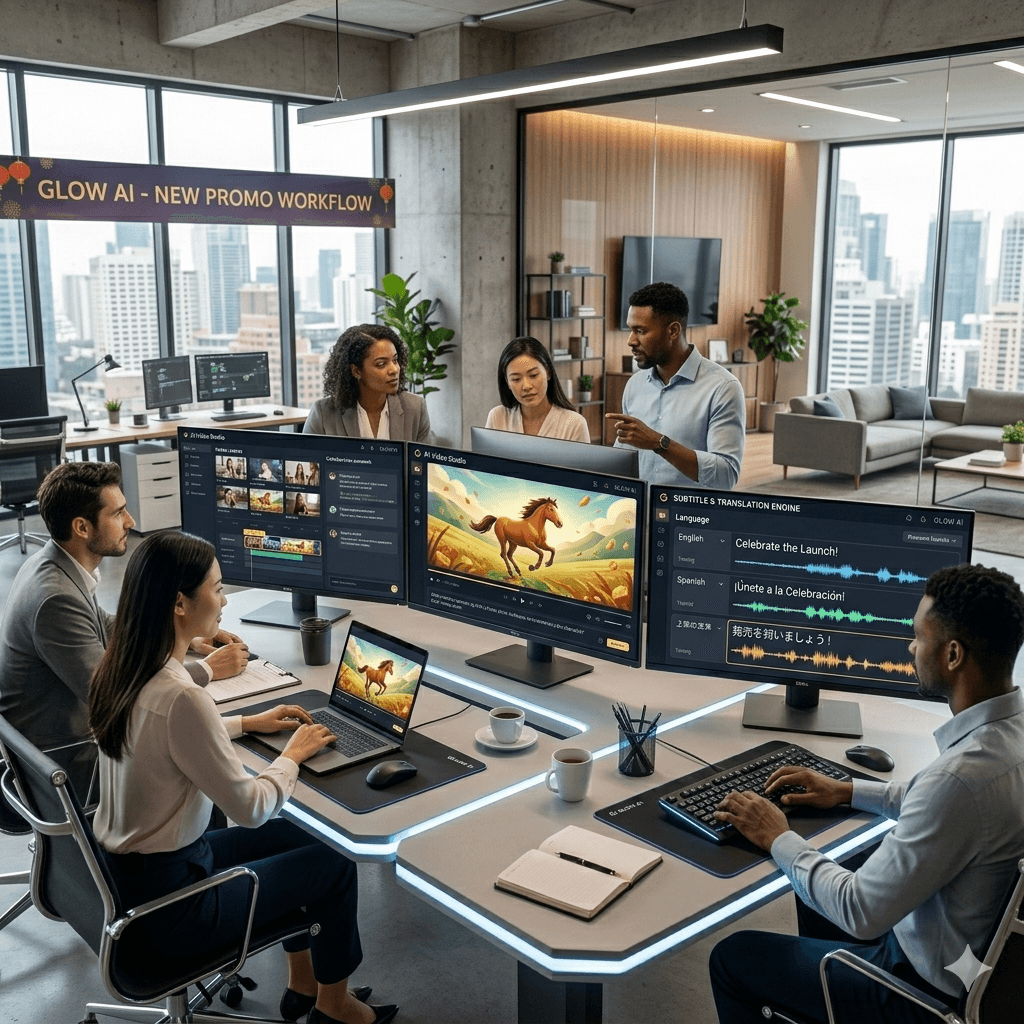


HappyHorse is one of the newest names drawing attention in AI video.

According to its public product pages, HappyHorse is designed for fast video creation workflows and supports both text-to-video and image-to-video generation, with 1080p output positioned for ads, social clips, product visuals, and brand content. Its messaging focuses less on experimental demos and more on turning prompts into video assets that are close to publish-ready.

The recent surge in attention is not random. Artificial Analysis shows that HappyHorse-1.0 was added to the leaderboard within the last month and currently sits at the top of the Text to Video leaderboard (No Audio), which is based on blind user preference voting.

That makes HappyHorse an interesting story for content teams. But it also highlights a bigger point: creating AI video faster does not automatically make it easier to understand, localize, or distribute.

The newest AI video tools are getting better at generating visuals, motion, and polished-looking scenes. HappyHorse’s public positioning emphasizes prompt-based video generation, image-based video generation, natural motion, and more stable scene continuity, all of which matter for teams producing marketing and social content at speed.

That progress is important. But in real publishing environments, performance depends on more than generation quality. Videos still need to be understandable without sound, easier to review internally, and easier to adapt for multiple markets. This is exactly where subtitle workflows become valuable for teams using new AI video tools.

Turn AI-Generated Videos Into Publish-Ready Content

HappyHorse can help teams create videos faster. AddSubtitle helps make those videos clearer, more accessible, and easier to distribute with subtitles and multilingual workflows.

Visit AddSubtitle: https://www.addsubtitle.com/

Sign up: https://www.addsubtitle.com/sign-up

Why HappyHorse Is Getting Attention

HappyHorse is being discussed because it lands at the intersection of two things teams care about most right now: video quality and workflow speed.

Its public product pages position it around several practical capabilities:

1080p output

text-to-video

image-to-video

more natural motion

stronger scene continuity

faster creative iteration

That matters because many teams are not looking for AI video tools just to test prompts. They want tools that can help them move faster on product marketing, ad concepts, social video, and campaign content. HappyHorse explicitly frames itself around those use cases.

The Bigger Story Is Workflow, Not Just Generation

The most interesting part of the HappyHorse story is not only that it is new. It is that it reflects a larger market shift.

Teams increasingly want AI tools that fit real production workflows. The HappyHorse site describes the product as part of a broader SaaS workflow where users can generate, preview, and iterate in one platform, rather than manage separate infrastructure themselves. The site also states that users do not need to deploy or manage their own model stack.

That is a strong signal for where the category is moving: from “look what AI can generate” to “how quickly can a team turn this into usable content?”

Why Subtitles Still Matter After the Video Is Generated

Even when AI video generation improves, one problem remains unchanged: audiences still need help understanding the content.

Subtitles matter because they help with:

silent viewing on social platforms

accessibility

multilingual distribution

clearer messaging in short-form video

faster internal review and editing decisions

For teams using AI video tools, subtitles are not just a finishing touch. They are part of what makes a generated video usable in the real world.

HappyHorse Can Generate the Video — AddSubtitle Helps It Travel Further

HappyHorse’s strengths appear to be in generation: turning prompts or images into polished video outputs and speeding up early-stage production.

But once a team wants that video to perform across markets, subtitle tooling becomes the next practical layer.

That is where AddSubtitle fits naturally:

add subtitles quickly

improve comprehension

prepare videos for multilingual audiences

make short-form content easier to follow

help generated content feel closer to publish-ready

In other words, AI video generation helps create the asset. Subtitle workflows help finish the asset for actual distribution.

The New AI Video Stack Is About Usability

Artificial Analysis says its video rankings are based on blind comparisons using an Elo system derived from user votes, which makes HappyHorse’s current leaderboard position especially notable.
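
For readers unfamiliar with Elo, the sketch below shows how a single blind preference vote could shift two models' ratings. It is a minimal illustration of the textbook Elo update, not Artificial Analysis's actual implementation: the function names, the K-factor of 32, and the 400-point scale are assumptions borrowed from the standard chess formulation.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    # The 400-point scale is the conventional chess value (an assumption here).
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one blind preference vote.

    k controls how far a single vote moves the ratings; 32 is a common
    default, not a value published by any leaderboard.
    """
    expected_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_a)
    # Elo is zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    # Two hypothetical models start level; a voter prefers the first one.
    print(update_elo(1000.0, 1000.0, a_won=True))
```

Because each update depends on how surprising the vote was, a newly added model that keeps beating higher-rated models can climb quickly, which is one plausible reason a recent entry can reach the top of a leaderboard within weeks.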

But even if a model rises fast, long-term adoption will still depend on usability.

That means:

how fast teams can iterate

how clearly the output communicates

how easy the content is to localize

how quickly the result can move into production

The AI video tools that win attention may be new. The tools that win adoption will be the ones that fit complete publishing workflows.

If your team is experimenting with new AI video tools like HappyHorse, do not stop at generation.

Use AddSubtitle to make AI-generated videos clearer, easier to watch, and better prepared for real distribution.
