Seedance 2.0 Is the AI Video Hot Topic But the Real Publishing Opportunity Starts After Generation

Addsubtitle Editorial Team

2026/03/23

AddSubtitle gives brands and creators full control over how their message reaches the world. It brings subtitles, voiceover, and translation into a single tool to speed up video workflows.

Seedance 2.0 is getting attention because it represents the new phase of AI video: stronger multimodal control, higher-quality outputs, and more creator excitement. But once the clip is generated, the real publishing challenge begins—translation, subtitles, and multilingual distribution.

Seedance 2.0 is becoming one of the most talked-about names in AI video because it pushes the conversation beyond novelty and closer to production. But the bigger market story is not only what it can generate. It is what teams must do after generation to turn those videos into multilingual, publishable assets.

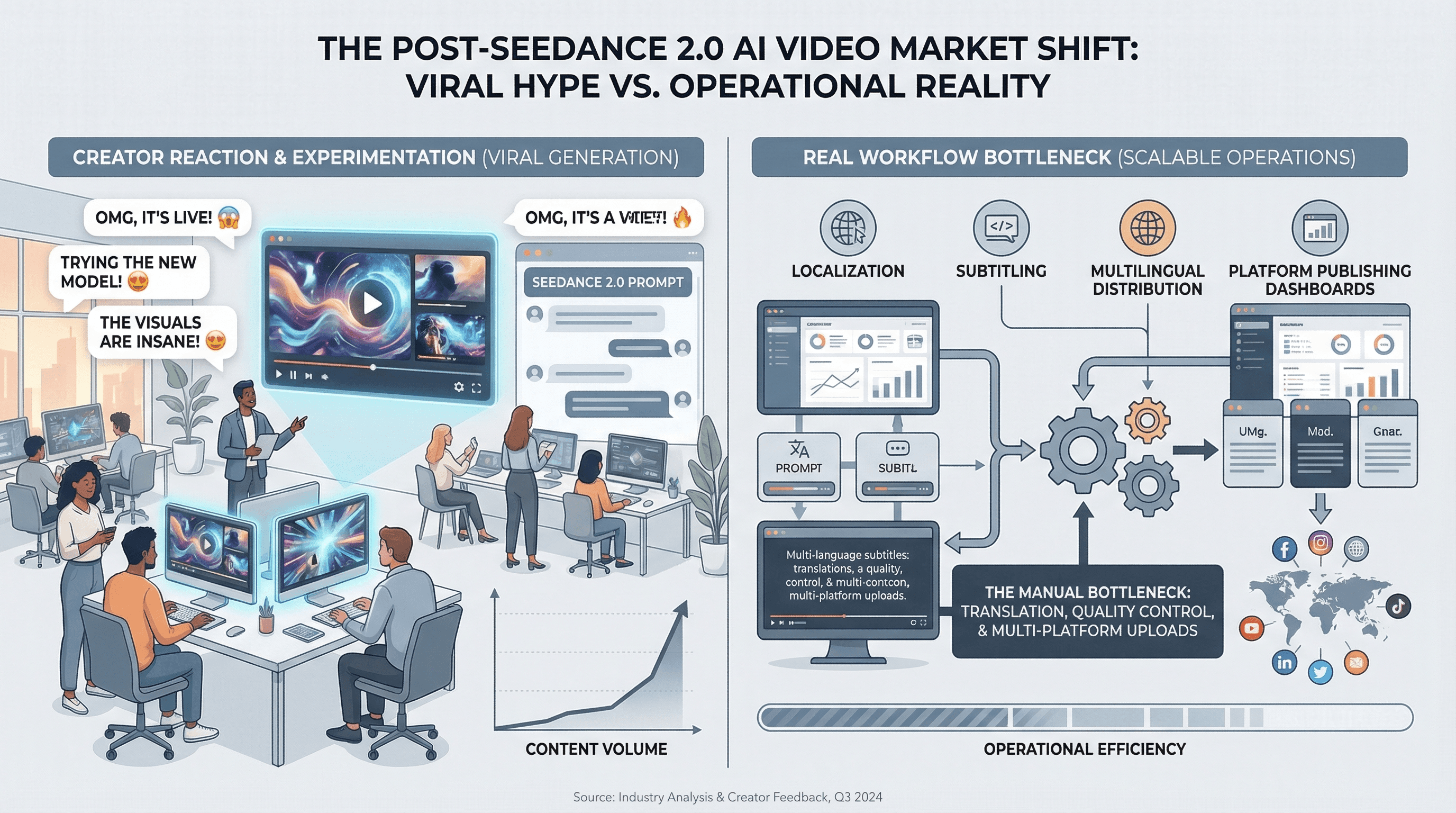

AI video moves in waves. First, the market gets excited by raw possibility. Then it shifts toward quality, control, consistency, and commercial usefulness. Seedance 2.0 is clearly landing in that second phase.

According to public reporting and early market discussion, Seedance 2.0 is being framed around multimodal input, stronger scene consistency, improved motion control, richer audiovisual generation, and creator-oriented workflow control. That combination is exactly why it is generating buzz. People are not reacting only to “another AI video model.” They are reacting to the possibility that AI video is becoming more operational and more usable.

That is the hot topic.

But there is a second, less flashy reality that matters just as much: generated video does not become business-ready the moment it renders. Once Seedance 2.0 produces the visual asset, teams still have to make it understandable, accessible, and localizable. That is the point where Addsubtitle becomes strategically important.

Caption: Seedance 2.0 may win attention at the generation layer, but publishing value is created in the downstream workflow.

Why is Seedance 2.0 suddenly a hot topic?

Seedance 2.0 is trending because it fits the current market desire for AI video systems that feel closer to real production tools than experimental demos.

Public discussion around the model has focused on several themes:

multimodal input instead of prompt-only generation

stronger reference control across images, video, audio, and text

more stable visual consistency across shots

better camera movement and scene control

higher-quality audiovisual output aimed at creator workflows

Those themes matter because the market is getting harder to impress. A year ago, “look, it moves” was enough. Now the question is whether a model can help creators and teams build repeatable content pipelines.

In other words, the excitement around Seedance 2.0 is not only aesthetic. It is operational.

What does Seedance 2.0 signal about the AI video market?

It signals that AI video competition is shifting from spectacle to workflow quality.

The most important thing about a model like Seedance 2.0 is not just whether it can output an attractive clip. It is whether it can reduce friction in a creator or marketing pipeline. If teams can move from concept to usable footage faster, and with fewer quality failures, then AI video becomes much more commercially meaningful.

That is why the current attention around Seedance 2.0 should be read as a market signal:

users want more control, not just more automation

consistency is now a major evaluation category

creator tools are being judged by downstream usability

AI video is moving closer to publishing and campaign workflows

This is also why discussion around “reference everything”-style control is resonating. People want systems they can steer, not just systems that surprise them.

Why generation quality alone still does not solve distribution

This is the part many hot takes miss.

Even if Seedance 2.0 improves the generation stage, the downstream work still decides whether the content can actually travel.

Once a video is generated, teams still need to answer practical questions:

How will viewers understand it without sound?

How will the spoken content be subtitled?

How will those subtitles be translated into other languages?

How many markets can this one creative asset support?

How much manual post-production is still required before publishing?

For global teams, these questions are not side issues. They are the real distribution layer.

A strong AI-generated video can still underperform if it is trapped in one language, published without readable captions, or left unusable for silent viewing environments.

Why subtitles become more important as Seedance 2.0 gets better

Paradoxically, the better Seedance 2.0 becomes, the more important subtitle and translation workflows become.

1. Better generation increases content volume

If teams can generate more campaign assets, product videos, or social clips at lower cost, they immediately create a post-production scaling problem. More video means more need for subtitle generation, subtitle editing, translation, and localization.

2. Distribution is where business value compounds

A video seen in one language is one asset. A video adapted cleanly into multiple languages is a distribution engine. That is the real multiplier.

3. Silent viewing still shapes platform performance

Many short-form environments begin with muted playback. In those contexts, subtitle clarity is not decoration. It is the message layer.

Caption: As AI video tools improve, the competitive bottleneck moves from rendering toward localization and publishing workflow.

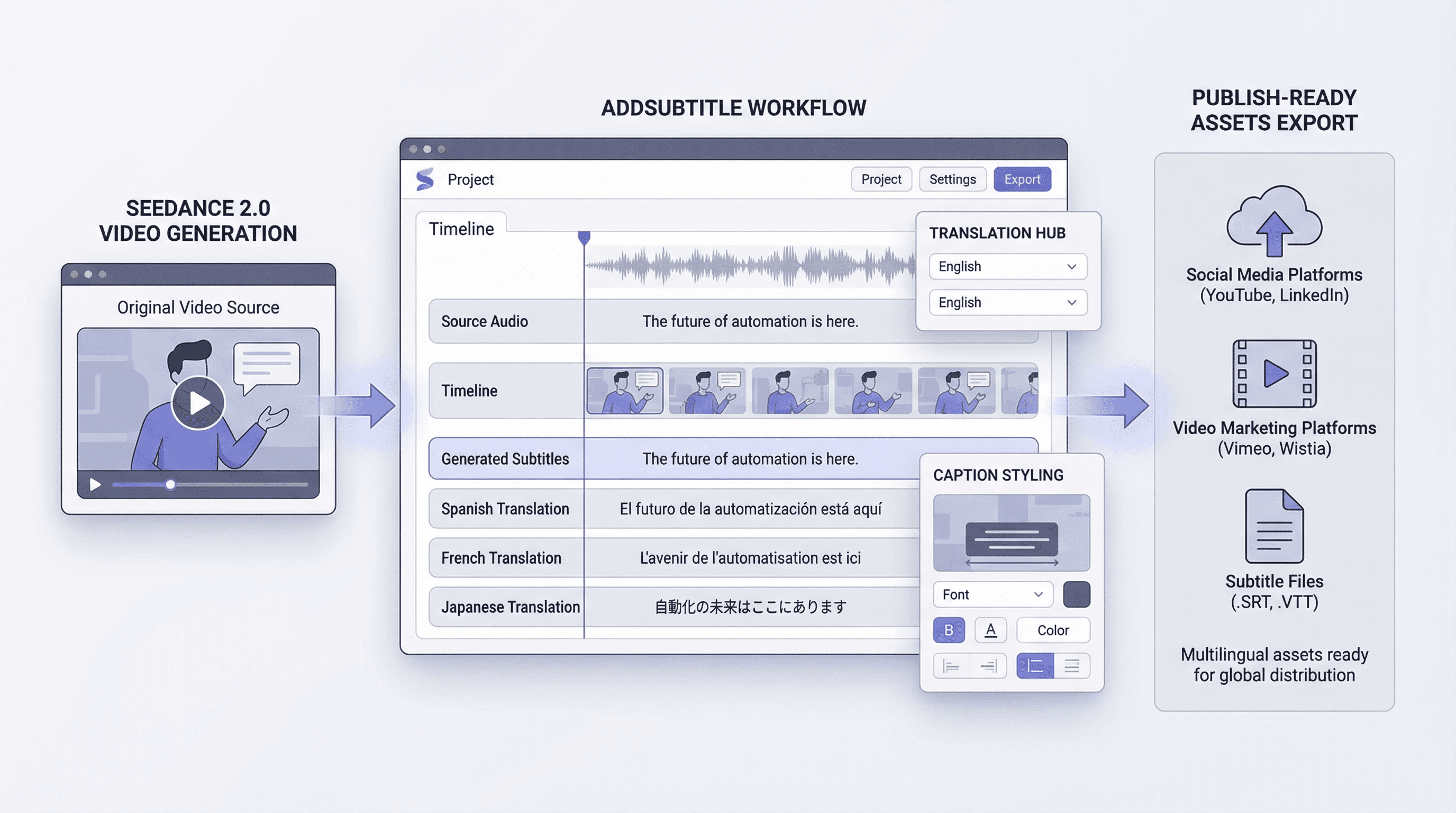

Where Addsubtitle enters the workflow

This is where Addsubtitle stops being a side utility and starts looking like a strategic partner to AI video generation.

Seedance 2.0 can help create the visual material. Addsubtitle helps make that material understandable and publishable across languages.

In practice, Addsubtitle supports the second half of the workflow:

generate subtitles from spoken video content

translate subtitles into multiple languages

improve readability for short-form and social viewing

reduce manual subtitle labor in multilingual publishing

help one generated video reach more regions and audience segments

This matters because the business value of AI video does not stop at creation. It grows when the same video can be reused, republished, and localized efficiently.
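As a rough illustration of the second half of that workflow, the sketch below turns timed transcript cues into one subtitle track per target language. It is a minimal sketch under stated assumptions, not Addsubtitle's interface, which this article does not describe. The names `Cue`, `srt_timestamp`, `to_srt`, and `localize` are hypothetical, SRT is assumed as the output format only because it is a widely used plain-text subtitle format, and the `translators` callables are stand-ins for whatever translation step a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Cue:
    """One timed line of spoken content (hypothetical structure)."""
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(cues: List[Cue]) -> str:
    """Render cues as SRT text: index, time range, text, blank separator."""
    blocks = []
    for index, cue in enumerate(cues, start=1):
        time_range = f"{srt_timestamp(cue.start)} --> {srt_timestamp(cue.end)}"
        blocks.append(f"{index}\n{time_range}\n{cue.text}\n")
    return "\n".join(blocks)


def localize(
    cues: List[Cue],
    translators: Dict[str, Callable[[str], str]],
) -> Dict[str, str]:
    """Build one SRT track per language, reusing the original timing.

    `translators` maps a language code to any function that translates
    a single line of text. The functions are placeholders (assumption).
    """
    tracks = {}
    for lang, translate in translators.items():
        translated = [Cue(c.start, c.end, translate(c.text)) for c in cues]
        tracks[lang] = to_srt(translated)
    return tracks


if __name__ == "__main__":
    source = [Cue(0.0, 2.5, "Hello"), Cue(2.5, 5.0, "Welcome to the demo")]
    # Toy glossaries standing in for a real translation step.
    es = {"Hello": "Hola", "Welcome to the demo": "Bienvenido a la demo"}
    tracks = localize(source, {"es": lambda text: es.get(text, text)})
    print(tracks["es"])
```

The design point is that timing is produced once and reused for every language, so adding a market adds a translation pass rather than a new round of manual subtitle timing.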

Why Addsubtitle should be mentioned in any serious Seedance 2.0 workflow discussion

If the market narrative around Seedance 2.0 is “AI video is becoming more controllable and more production-ready,” then the natural follow-up question is obvious:

How do we turn that generated output into something global, readable, and usable across real publishing channels?

That is exactly the problem Addsubtitle helps solve.

For teams working with Seedance 2.0 content, Addsubtitle makes the workflow more complete by enabling:

subtitle generation for accessibility and silent viewing

translation for multilingual reach

cleaner communication across regional audiences

faster publishing preparation with less manual subtitle work

In that sense, Addsubtitle is not competing with Seedance 2.0. It is monetizing the gap that appears after generation.

That gap is where many AI content workflows either become scalable or break.

The smarter hot take on Seedance 2.0

The shallow hot take is: Seedance 2.0 makes AI video look better.

The smarter hot take is: Seedance 2.0 raises the ceiling on generation, which makes localization, subtitles, and multilingual publishing even more critical.

That is the more durable interpretation because it connects product excitement to workflow economics.

The future winners in AI video will not just be the models that create beautiful clips. They will be the systems and toolchains that help teams distribute those clips across languages, platforms, and markets without exploding manual effort.

That is why Seedance 2.0 and Addsubtitle belong in the same workflow conversation.

Conclusion

Seedance 2.0 is hot because it reflects where AI video is going: more control, more consistency, more production value, and more creative utility. But the real commercial opportunity does not end at generation.

Once a video is created, teams still need subtitles, translation, accessibility, and multilingual publishing support. That is where Addsubtitle becomes essential.

For teams experimenting with Seedance 2.0, the better strategic framing is not “video generation versus subtitles.” It is “video generation plus subtitle localization” as one integrated publishing workflow.

That is how a generated clip becomes a distributable asset.
